With just a few days left until our final submission and presentation, our AI Empowered Design projects are finally coming together.

On the live video abstraction side, we’ve conceptualized a system called A.L.I.V.E.: Automated Live Video Extraction. Admittedly, the title is rather contrived, but it wouldn’t truly be an exchange program if we didn’t impart some forced acronyms to Zhejiang University, in true Singaporean fashion.

For those of you who haven’t been following the happenings of theme one, here’s a little more background on our project. A few years ago, Alibaba launched a live-streaming system on its e-commerce platform, Taobao. This service allows Taobao merchants and other third-party streamers to broadcast product reviews and recommendations on their channels. It quickly exploded in popularity and proved to be a remarkably effective tool for emptying the pockets of customers; last year, the sales volume from Taobao Live exceeded ¥100 billion (S$20 billion). The platform is so effective that Taobao has launched a live-streamer program that provides professional training for streamers.

What we aim to do, then, is to package the clear benefits of Taobao’s live-streaming platform into more focused and targeted snippets. Taobao Live streams can, and regularly do, run for eight hours or more. For any given viewer, much of that airtime is spent on products that may not interest them. By extracting the most relevant parts of full-length live-streams, we can turn these streams into more digestible bits of information while retaining the advantages of live-streaming.

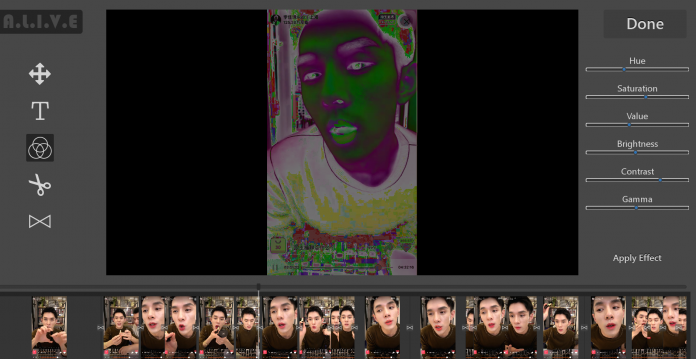

For my part, I’ve been working on building a user interface for our project. Our method for automating the video extraction process consists of four stages: stages one to three are completely automated, while the last stage allows users to manually edit the computer-generated video extract. This means that I’ve also had to create a functional video editor in the user interface. As the entire program is written in Python, with Pygame providing only low-level graphical interface capabilities, this turned out to be a rather tedious task: it took about 1,500 lines of code (the longest single application I’ve written so far).
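For the curious, here is a minimal sketch of the kind of data model a manual editing stage could sit on top of. It is purely illustrative and is not our actual code: `Segment`, `trim`, `remove` and `total_duration` are names invented for this example, and it assumes the computer-generated extract can be treated as an ordered list of clips, each defined by start and end timestamps (in seconds) in the original stream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One clip of the source stream, in seconds from the start (hypothetical)."""
    start: float
    end: float

    @property
    def duration(self) -> float:
        return self.end - self.start


def _check_index(segments, index):
    # Reject negative or out-of-range indices so list slicing stays predictable.
    if not 0 <= index < len(segments):
        raise IndexError("no segment at index %d" % index)


def trim(segments, index, new_start=None, new_end=None):
    """Return a new list with the boundaries of one segment adjusted."""
    _check_index(segments, index)
    seg = segments[index]
    start = seg.start if new_start is None else new_start
    end = seg.end if new_end is None else new_end
    if not 0 <= start < end:
        raise ValueError("a segment needs 0 <= start < end")
    return segments[:index] + [Segment(start, end)] + segments[index + 1:]


def remove(segments, index):
    """Return a new list without the segment at the given index."""
    _check_index(segments, index)
    return segments[:index] + segments[index + 1:]


def total_duration(segments):
    """Total running time of the edited extract, in seconds."""
    return sum(seg.duration for seg in segments)


# Example: an automatically generated extract, then two manual edits.
extract = [Segment(120.0, 180.0), Segment(900.0, 960.0), Segment(3600.0, 3690.0)]
edited = trim(extract, 0, new_end=150.0)  # shorten the first clip
edited = remove(edited, 1)                # drop the second clip entirely
```

Each edit returns a new list rather than changing the old one, which is one simple way to make an undo feature possible: the editor only has to keep the previous lists around.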

I shan’t divulge too much more on the details of this application, as we have yet to present our project (and it’s nice to keep a bit of mystery). But for now, it’s back to work for me.