Continued from Part I. This section explains my design thinking, design choices, and how I moved beyond the first prototype.

1.2 Cascade.exe

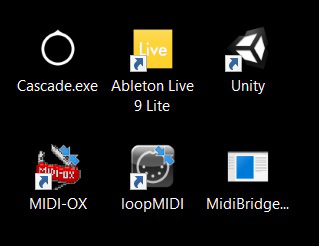

With valuable insights from the initial Python prototype into what worked and what didn’t, I decided to build Cascade as a standalone desktop application. I envisioned a clean and intuitive 2D interface, and chose Unity as the engine because it let me build that interface quickly. Almost all the scripting was done in C#, using Unity’s API. Switching from the syntactically simpler Python was a steep learning curve, but development became easier thanks to features the API provides, such as coroutines and quaternion calculations.

Developing the control system required a solid understanding of quaternions and rotations, as each gyroscope update was stored as a quaternion. Quaternions avoid the problem of gimbal lock, in which one degree of freedom is lost when two of the three Euler rotation axes become aligned. A quaternion is stored as a four-dimensional vector with the parameters (w, x, y, z). I used quaternions to translate the gyroscope data into a vector that can be projected onto a 2D plane, giving horizontal and vertical movement on screen.
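As a rough illustration of the projection idea, here is a minimal Python sketch rather than the C# code used in Cascade. It rotates a reference "forward" vector by the orientation quaternion and keeps the x and y components as horizontal and vertical offsets. The function names, the choice of reference vector and the `gain` parameter are all assumptions made for this example, and the sign conventions will depend on the sensor's coordinate frame.

```python
import math


def rotate_vector(q, v):
    """Rotate the 3D vector v by the quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    # Normalise so the quaternion represents a pure rotation (assumes q is non-zero).
    norm = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / norm, x / norm, y / norm, z / norm
    vx, vy, vz = v
    # t = 2 * cross(q_vec, v)
    tx = 2.0 * (y * vz - z * vy)
    ty = 2.0 * (z * vx - x * vz)
    tz = 2.0 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_vec, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def pointer_position(q, reference=(0.0, 0.0, 1.0), gain=1.0):
    """Map an orientation to (horizontal, vertical) offsets clamped to [-1, 1].

    `reference` is the assumed direction the arm points at rest, and `gain`
    is a hypothetical sensitivity factor.
    """
    fx, fy, _ = rotate_vector(q, reference)

    def clamp(a):
        return max(-1.0, min(1.0, a))

    return clamp(gain * fx), clamp(gain * fy)
```

With the identity quaternion (1, 0, 0, 0) the pointer sits at the centre, and a quarter turn about the y axis pushes it to the horizontal edge. In Unity, multiplying a `Quaternion` by a `Vector3` performs the same rotation.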

Cascade requires a virtual MIDI port, provided by loopMIDI, which replaces a physical MIDI cable and carries information through the port to the DAW.

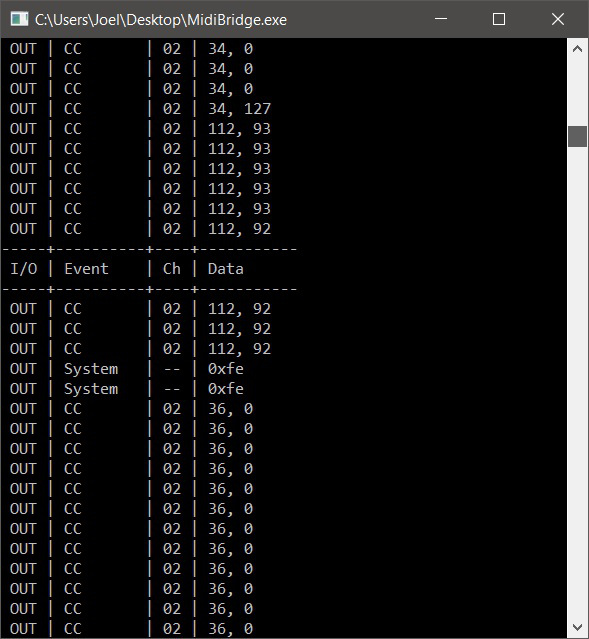

In order to broadcast MIDI messages on the virtual port, I used MidiBridge, a console executable written by Keijiro Takahashi, a developer who also works with Unity and music production.

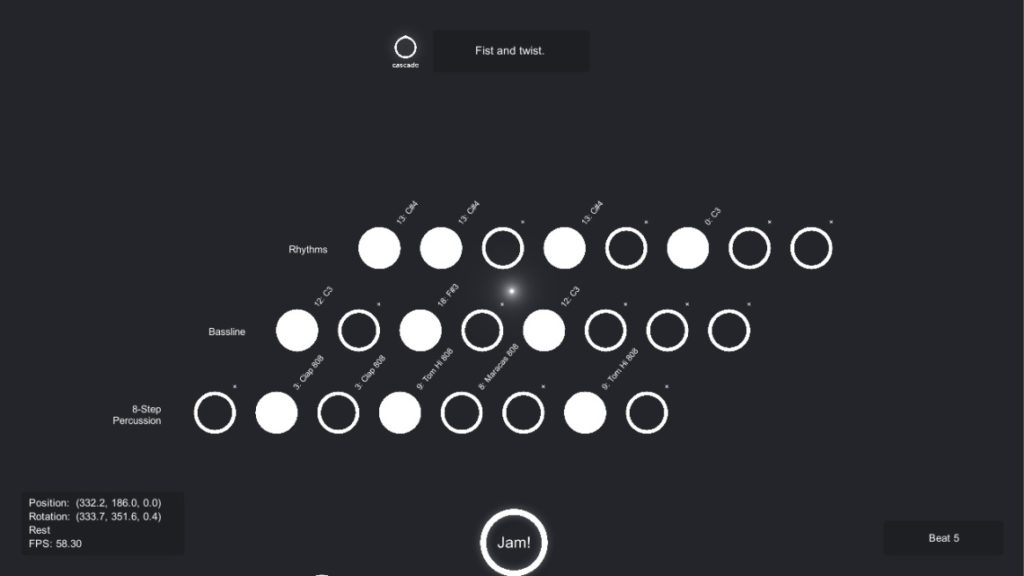

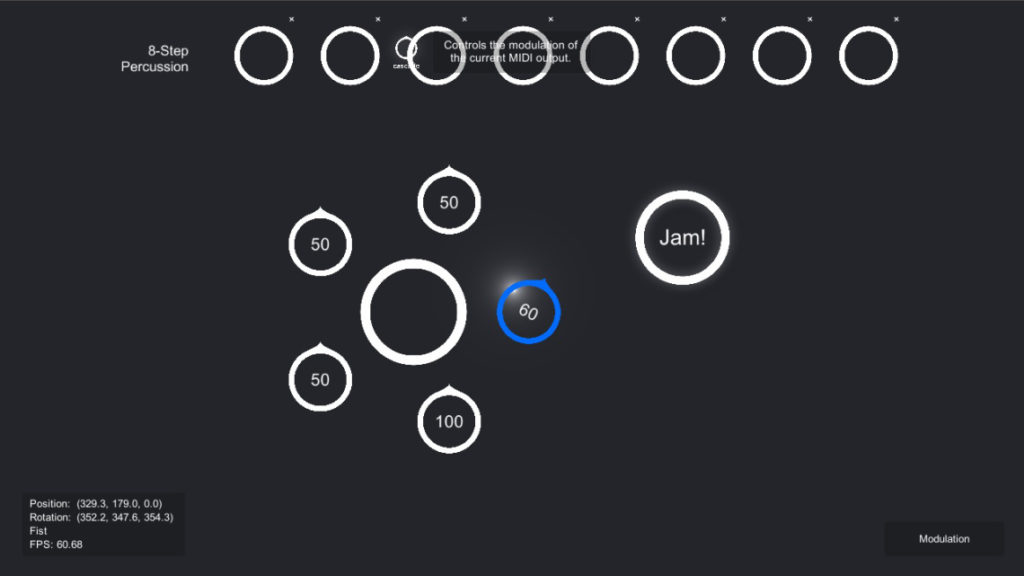

The available interface space is divided into two main sections. The first is the sequencer section, which features three levels: an 8-step drum sequencer, a bassline sequencer and a rhythm section sequencer. The user can toggle each beat on or off by making a fist, and change the note or drum selection by rotating the arm slightly while holding the fist gesture.
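To make the sequencer model concrete, here is a small Python sketch of how three 8-step tracks could be represented. It is an illustration under my own assumptions, not Cascade's actual data structure: the `Track` class, the default note of 60 and the `shift_note` helper (standing in for the arm rotation gesture) are hypothetical.

```python
from dataclasses import dataclass, field

STEPS = 8  # each sequencer level has eight steps


@dataclass
class Track:
    name: str
    active: list = field(default_factory=lambda: [False] * STEPS)
    notes: list = field(default_factory=lambda: [60] * STEPS)  # assumed default note

    def toggle(self, step):
        """Fist gesture: switch a beat on or off."""
        self.active[step] = not self.active[step]

    def shift_note(self, step, delta):
        """Arm rotation while holding the fist: change the note, kept within 0-127."""
        self.notes[step] = max(0, min(127, self.notes[step] + delta))


def notes_at(tracks, step):
    """Return (track name, note) for every track with an active beat at this step."""
    i = step % STEPS  # wrap around so the pattern loops
    return [(t.name, t.notes[i]) for t in tracks if t.active[i]]
```

On each tick of the clock, the playhead would call `notes_at` with the current step and send the returned notes out as MIDI.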

The second section contains an effects module, which is a radial menu node with five assignable controls. The default controls are volume, pan, modulation, tempo and a bandpass filter. The second section also contains a play/stop node. To start the track, the user makes a simple spread-fingered gesture while hovering over the node; a fist gesture stops the track.

1.3 EMG to Music

Cascade can also translate raw EMG data into music. Raw EMG data is represented as an array of integers, with each index holding the value from one of the eight available EMG sensor channels. Given the stream of incoming arrays, a plot of EMG value against time shows the type of signal coming in.

EMG signals are volatile, and it is ambiguous where changes in the signal originate, so the data needs to be stabilised or smoothed before use. A Fourier transform could do this, but I used a simpler interval sampling method. The EMG value is recorded at fixed intervals: t, t+n, t+2n and so on. The average of the current value and the previous two values is then calculated and passed into a scaling function that returns an integer between 0 and 127. This can then be sent through MidiBridge to Ableton Live as either a control message or a pitch value.

However, using EMG data to modify parameters like continuous controllers is not advisable, because the values are neither accurate nor likely to be replicable. Similarly, a pitch change made by flexing your forearm cannot be replicated accurately, but the result is far more forgiving when filtered through a simple scale, say minor pentatonic, while playing over a sequenced track.

The EMG data is streamed at 200 Hz, which is too high a resolution for any sensible musical pitch change. The sampling rate for Cascade therefore has to be far lower, which introduces noticeable latency and unresponsiveness in the audio output.
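The sampling, averaging and scaling steps described above can be sketched in a few lines of Python. This is a minimal illustration, not Cascade's code: the function names, the input range passed to `scale_to_midi`, the root note and the two-octave span are assumptions chosen for the example.

```python
from collections import deque

MINOR_PENTATONIC = (0, 3, 5, 7, 10)  # semitone offsets from the root


def downsample(stream, n):
    """Keep the samples at t, t+n, t+2n, ... from one EMG channel."""
    return stream[::n]


def smooth(samples):
    """Average each sample with the previous two (fewer at the very start)."""
    window = deque(maxlen=3)
    out = []
    for s in samples:
        window.append(s)
        out.append(sum(window) / len(window))
    return out


def scale_to_midi(value, lo, hi):
    """Linearly map a value from the assumed input range [lo, hi] to 0-127."""
    value = max(lo, min(hi, value))
    return round((value - lo) / (hi - lo) * 127)


def quantize_to_scale(midi_value, root=57, scale=MINOR_PENTATONIC, octaves=2):
    """Snap a 0-127 value onto a scale so that every output is an in-key pitch."""
    pitches = [root + 12 * o + s for o in range(octaves) for s in scale]
    return pitches[midi_value * len(pitches) // 128]
```

For instance, taking every 20th sample of a 200 Hz stream gives ten updates per second. Whether that is the right trade-off between stability and latency depends on the material being played.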

The last post can be found here.