Interfacing Gestural Control for Musical Performance

Full post on my personal blog.

Abstract

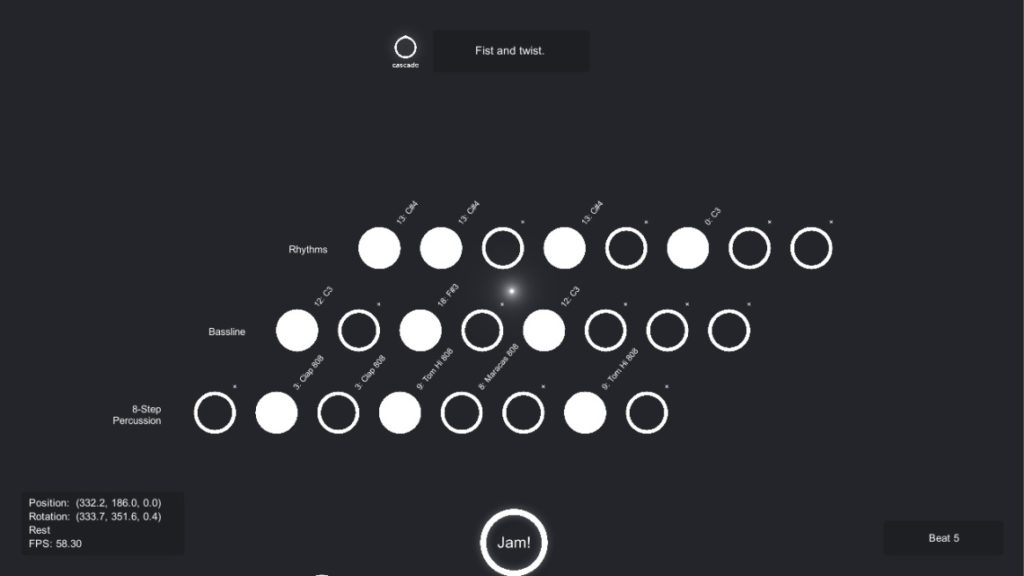

Thalmic Labs' Myo is an all-in-one gesture control armband equipped with 8 electromyographic (EMG) sensors and a nine-axis inertial measurement unit (IMU) that combines an on-board accelerometer, gyroscope and magnetometer. The armband streams this data over Bluetooth to a USB dongle. In the NIME (New Interfaces for Musical Expression) community, EMG and IMU input have been harnessed for decades for both studio recording and live performance. With Myo, Thalmic has made the power of EMG and IMU available, in a single wearable, to the average consumer at a reasonable price. Cascade aims to bridge the gap between the hardware genius of Myo and the software complexity of modern-day digital audio workstations (DAWs), like Ableton Live and FL Studio, by providing a clean and intuitive interface that transforms physical motion into musical output.

1. Design Process

Much thought went into each level of design in Cascade. The major design considerations were intuitive control, ease of learning for beginners, and a final audio output not dissimilar to that of traditional or commercial MIDI controllers.

1.1 Prototyping

One of the key design decisions for Cascade came very early on: the choice between Open Sound Control (OSC) and Musical Instrument Digital Interface (MIDI) for sending digital musical data. MIDI was chosen despite its many flaws because its adequate back-end support and widespread usage ensure compatibility with most DAWs and synthesizers. MIDI messages are event based and can be sent on different channels. For musical notes, a status byte carries the on/off command and the channel number, and two data bytes carry the pitch value and the velocity value. For continuous controllers (volume, filters, pan, etc.), the status byte carries the control-change command and the channel number, and the data bytes carry the controller ID value and the controller change value.
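To make the message layout concrete, here is a minimal sketch of how note and controller messages can be assembled as raw bytes. The helper names (`note_message`, `control_change`) are hypothetical and not taken from Cascade; the status values (0x90 note on, 0x80 note off, 0xB0 control change) and the 7-bit data range come from the MIDI 1.0 specification. Channels are numbered 0 to 15 on the wire.

```python
# Status byte high nibbles defined by the MIDI 1.0 specification.
NOTE_OFF = 0x80
NOTE_ON = 0x90
CONTROL_CHANGE = 0xB0


def _check(value, upper, name):
    """Reject values outside 0..upper so a bad byte never reaches the synth."""
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be in 0..{upper}, got {value}")
    return value


def note_message(on, channel, pitch, velocity):
    """Build a 3-byte note on/off message: [status, pitch, velocity]."""
    status = (NOTE_ON if on else NOTE_OFF) | _check(channel, 15, "channel")
    return [status, _check(pitch, 127, "pitch"), _check(velocity, 127, "velocity")]


def control_change(channel, controller, value):
    """Build a 3-byte control change message: [status, controller, value]."""
    status = CONTROL_CHANGE | _check(channel, 15, "channel")
    return [status, _check(controller, 127, "controller"), _check(value, 127, "value")]


# Middle C (note 60) on the first channel, then channel volume (controller 7).
middle_c_on = note_message(True, 0, 60, 100)
full_volume = control_change(0, 7, 127)
```

A list of three integers like this is the form most MIDI output libraries accept for a single message, so the same helpers could feed whichever back end is in use.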

The first prototype was closer to an actual musical instrument. It mapped each of the forearm's current Tait-Bryan angles (pitch, yaw and roll) linearly onto a different musical parameter. It was created in Python, using the rtmidi C++ library, which provides a common application programming interface (API) for real-time MIDI input and output. The MIDI messages were then sent directly to Ableton Live, a popular DAW, with a MIDI instrument selected.
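The linear translation described above can be sketched as a single clamped mapping function. This is an illustration only: the function name `angle_to_midi` and the angle ranges in the example are assumptions, since the post does not give the ranges the prototype used.

```python
def angle_to_midi(angle, lo, hi, out_min=0, out_max=127):
    """Linearly map an angle in [lo, hi] to an integer in [out_min, out_max].

    Angles outside the range are clamped, so the result is always a valid
    MIDI data value when the output range stays within 0..127.
    """
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    t = (angle - lo) / (hi - lo)      # normalise to 0..1
    t = min(1.0, max(0.0, t))         # clamp out-of-range motion
    return out_min + round(t * (out_max - out_min))


# Hypothetical ranges in degrees: forearm elevation between -45 and +45
# controls a volume value between 0 and 127.
quiet = angle_to_midi(-45.0, -45.0, 45.0)
loud = angle_to_midi(45.0, -45.0, 45.0)
```

Clamping matters in practice because the arm can easily move past whatever range was chosen, and an unclamped value would fall outside what a MIDI data byte can hold.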

For example, in the first iteration, the yaw (horizontal) movement of the forearm would control MIDI notes over two octaves (25 chromatic notes), the pitch (vertical) attitude of the forearm would scale the volume parameter between 0 and 127, and the roll (rotation) of the arm would control a simple band-pass filter. Since sweeping the arm would otherwise play every note chromatically from lowest to highest, a scale filter was added to limit the notes played to a musical scale (say, Hirajōshi or major pentatonic).
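One way a scale filter like this can work is to snap each chromatic note down to the nearest note that belongs to the chosen scale. The sketch below assumes that snapping behaviour, a root of middle C (MIDI note 60), and the major pentatonic intervals; the post does not say how Cascade's filter was actually implemented, and `snap_to_scale` is a hypothetical name.

```python
# Semitone offsets from the root for a major pentatonic scale.
MAJOR_PENTATONIC = (0, 2, 4, 7, 9)


def snap_to_scale(note, root=60, intervals=MAJOR_PENTATONIC):
    """Return the highest scale note that is at or below `note`.

    `intervals` must contain 0 (the root), so every note has a match.
    """
    octave, degree = divmod(note - root, 12)
    allowed = max(i for i in intervals if i <= degree)
    return root + 12 * octave + allowed


# Two octaves of chromatic input (25 notes) reduced to the scale.
chromatic = range(60, 85)
filtered = sorted({snap_to_scale(n) for n in chromatic})
```

Any other scale can be swapped in by passing a different tuple of semitone offsets, as long as it includes the root at offset 0.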

After testing, it was apparent that using IMU data to control musical pitch was a poor choice: the user could not tell which notes were playing, nor did the user have adequate control over which notes to play.

One of the most serious problems with real-time control using Tait-Bryan angles was that the human forearm does not pivot about its centre, nor does the Myo rotate about the centre of its IMU. This causes interaction between the angles that makes it hard to separate one dimension of control from another. For example, if the arm is waved to the right near the limit of its range of motion (positive yaw), the arm tends to elevate and rotate clockwise (positive pitch, positive roll). In the first prototype, this would translate to an increase in volume and a higher frequency for the filter, while only a move to higher notes (musical pitch, not the pitch angle) was intended.

Despite all the initial problems, creating audio output from physical motion was a novel, interesting experience even though the sound had no real structure or emotion to it.

This post is continued here.